Quick Take

- Launch: April 21, 2026 — across ChatGPT (web, app), Codex, and via API

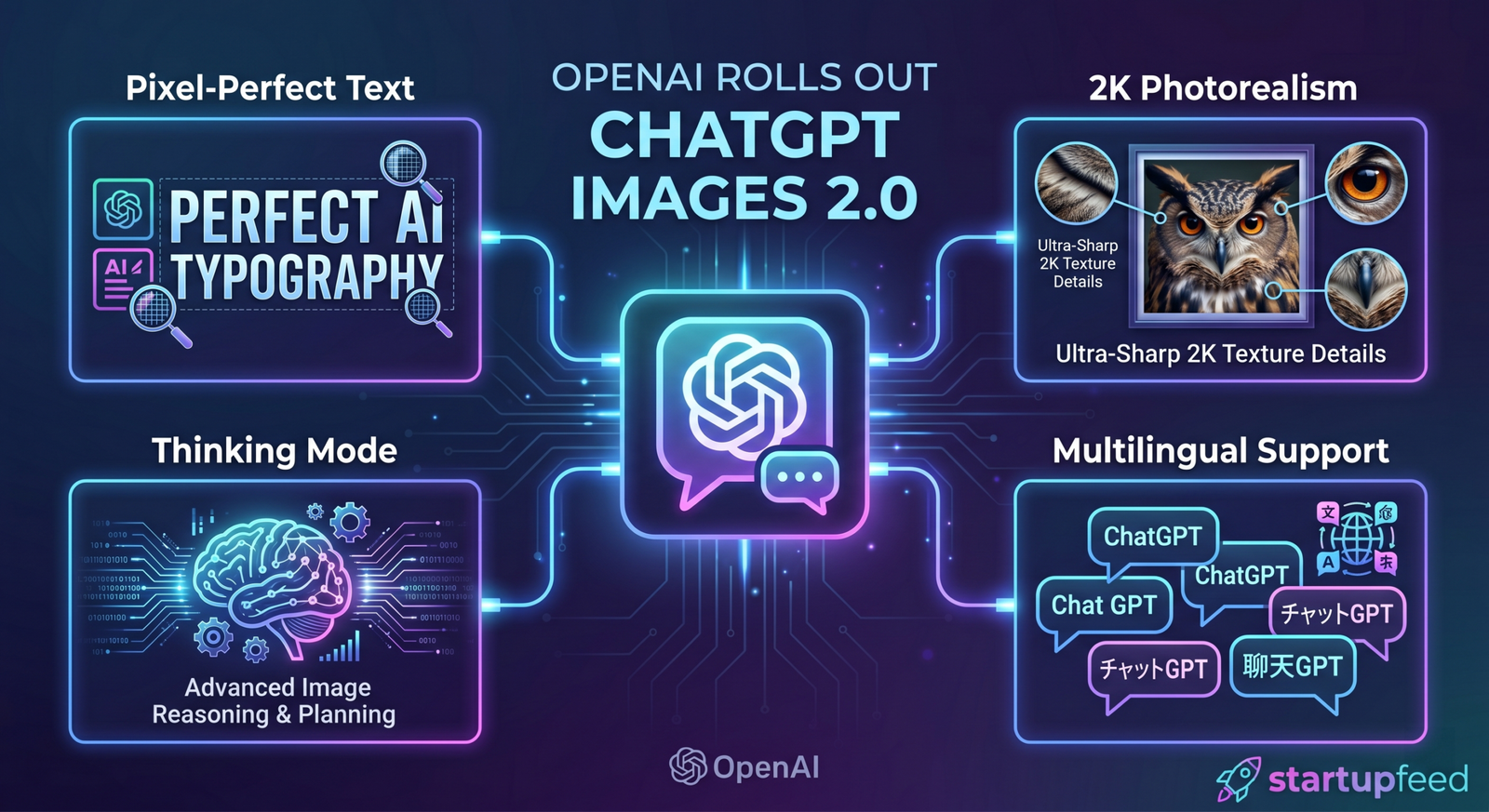

- Model: ChatGPT Images 2.0 (API name: gpt-image-2); architecture ‘revamped from scratch’ (Research Lead Boyuan Chen); knowledge cutoff December 2025

- Headline upgrade: Pixel-perfect text rendering — small text, iconography, UI elements, dense compositions, infographics, posters, menus, magazine covers; previously a fundamental failure point for all diffusion models

- Two modes: Instant (all users — fast, strong quality) + Thinking (paid: Plus, Pro, Business — reasons before generating; web search; up to 8 consistent multi-image outputs; multi-frame character consistency)

- Multilingual: High-fidelity non-Latin script rendering — Hindi, Bengali, Japanese, Korean, Chinese; text renders coherently integrated into design, not just translated

- Formats: Up to 2K resolution; aspect ratios 3:1 to 1:3; up to 10 images per prompt (8 in Thinking mode with consistency); selective area editing; conversational refinement

- Pricing: API: $0.006/image (low, 1024×1024) → $0.053 (medium) → $0.211 (high); up to $0.41/image at 4K on third-party platforms; thinking mode reserved for paid tiers

- Competition: Directly rivals Google’s Nano Banana 2 (Gemini 3 Pro Image, Feb 2026) — the only other model with comparable dense text capabilities ‘baked in’

OpenAI launched ChatGPT Images 2.0 on April 21, 2026, describing it as a ‘step change’ in what AI image generation can do. The update is not an incremental improvement — the underlying architecture was rebuilt from scratch. The new model, available across ChatGPT, Codex, and the developer API (as gpt-image-2), is designed to move AI-generated images from a creative novelty into a production-grade tool for professional design, marketing, education, and software development workflows.

“Images are a language, not decoration. A good image does what a good sentence does: it selects, arranges, and reveals. It can explain a mechanism, stage a mood, test an idea, or make an argument.” (OpenAI, April 21, 2026)

What’s Actually New — The Six Core Upgrades

| Upgrade | What Changed | Why It Matters |
| --- | --- | --- |
| Text Rendering | Pixel-perfect typography inside images: small text, iconography, UI elements, dense compositions, legends, labels, menu items, poster copy. Previous diffusion models hallucinated letters; gpt-image-2 renders actual words correctly. | This single change moves AI image generation from ‘creative exploration’ to ‘production asset.’ A designer can now use it for real deliverables (menus, infographics, posters, banners) without spending hours fixing garbled text in Photoshop. |
| Thinking Mode | A reasoning-first generation process in which the model plans before it creates. It can search the web for real-time accuracy, keep characters consistent across multiple frames, produce up to 8 images from a single prompt, and double-check its own outputs. | Game-changer for sequential content (manga, storyboards, multi-scene designs) and for accuracy-dependent outputs (maps, educational diagrams, product mockups with current branding). Previously impossible with one-shot diffusion generation. |
| Photorealism at 2K | Images up to 2,048 pixels wide; quality-first architecture described as ‘state-of-the-art photorealism’; finer, more realistic images where details don’t appear artificial. | At 2,048 pixels wide, output prints at about 6.8 inches across at 300 dpi without upscaling; larger print formats still need upscaling. Photorealism means brand photography mockups, product shots, and editorial images can be generated for concept validation without a studio shoot. |
| Multilingual Non-Latin Script | High-fidelity text generation in Japanese, Korean, Chinese, Hindi, and Bengali, with text ‘rendered correctly with language that flows coherently,’ not just translated. Text is natively integrated into the design. | Critical for India, East Asia, and MENA markets. An Indian brand can now generate marketing assets with accurate Devanagari text directly, without a separate localisation step. A Japanese publisher can auto-generate manga panels with readable kanji. |
| Flexible Formats and Aspect Ratios | Aspect ratios from 3:1 (ultra-wide) to 1:3 (ultra-tall); up to 10 images per single prompt; batch generation with consistent visual style or deliberate variation for A/B testing. | This matches how real creative workflows operate: social media, out-of-home (OOH), banners, and print all have different aspect ratios. Batch-generating all variants from one prompt compresses days of creative work. |
| Conversational Editing | Users can refine images through natural language conversation (zoom in, adjust elements, change compositions, make selective area edits) without restarting. The model retains context across edits. | This is the ‘Photoshop alternative’ moment. Non-designers can now iterate on visuals without learning new software. Designers can use natural language for rough passes before fine-tuning in traditional tools. |
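To make the aspect-ratio range concrete, here is a minimal sketch that turns a ratio such as 16:9 into pixel dimensions with the longer side capped at 2,048 pixels. The helper `target_size` is hypothetical, and the cap, the rounding, and the idea that the model accepts arbitrary sizes inside this range are all assumptions; the article only states "up to 2K" and "3:1 to 1:3".

```python
from fractions import Fraction

MAX_SIDE = 2048              # "up to 2K" per the article; the exact pixel cap is an assumption
MIN_RATIO = Fraction(1, 3)   # 1:3, ultra-tall
MAX_RATIO = Fraction(3, 1)   # 3:1, ultra-wide


def target_size(width_part: int, height_part: int, max_side: int = MAX_SIDE) -> tuple[int, int]:
    """Return (width, height) in pixels with the longer side equal to max_side."""
    if width_part <= 0 or height_part <= 0:
        raise ValueError("ratio parts must be positive")
    ratio = Fraction(width_part, height_part)
    if not MIN_RATIO <= ratio <= MAX_RATIO:
        raise ValueError(f"{width_part}:{height_part} is outside the 1:3 to 3:1 range")
    if ratio >= 1:
        # Landscape or square: width is the long side.
        return max_side, round(max_side / ratio)
    # Portrait: height is the long side.
    return round(max_side * ratio), max_side


for parts in [(3, 1), (16, 9), (1, 1), (1, 3)]:
    print(parts, target_size(*parts))
```

A banner team could run every placement's ratio through a helper like this before batching prompts, so out-of-range formats are caught before any image is paid for.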

Why This Matters Specifically for India

ChatGPT Images 2.0 has a set of capabilities that are particularly relevant to India’s large and growing digital economy — and specifically to Indian marketers, designers, developers, and content creators:

- Hindi and Bengali text rendering is real now: India’s language-first internet is built on Devanagari, Bengali, Tamil, and Gujarati scripts — not Latin characters. For the first time, an AI image model can generate marketing assets, educational materials, and social media graphics with correctly rendered Hindi and Bengali text. This is a direct unlock for India’s 600+ million vernacular internet users.

- Indian brands can generate localised assets at scale: A single prompt can now produce a product poster with accurate Devanagari text, an educational infographic with Bengali labels, or a social media graphic with mixed Hindi-English typography — all at production quality. This compresses what previously required a localisation agency into a single ChatGPT session.

- Indian EdTech and D2C brands are the immediate beneficiaries: EdTech companies building content for Hindi, Bengali, and regional language learners can now generate textbook-quality diagrams with correct local script. D2C brands can generate product photography with accurate label text without a studio shoot for every SKU.

- Indian developers get API access: The gpt-image-2 API, available from launch, means Indian SaaS companies and startups can embed production-quality image generation into their products without building their own models. At $0.053 per medium-quality image, the cost is viable for commercial applications.

- The competition for Indian creative professionals: This release, combined with similar capabilities in Google’s Nano Banana 2, means that rote creative production work (banner variations, infographic localisation, social media templates) will increasingly be automated. Indian creative agencies that haven’t built AI-first workflows are now under direct competitive pressure.
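As a rough illustration of the localised-asset workflow described in the points above, the sketch below builds one request body per language for an image generation call. It only constructs the JSON payloads and does not send them. The model name gpt-image-2 comes from this article; the endpoint is that of OpenAI's existing Images API, and the `size`, `quality`, and `n` fields mirror earlier image models, so whether gpt-image-2 accepts them unchanged is an assumption. The helper `build_image_request` and the sample headlines are hypothetical.

```python
import json

# Endpoint of OpenAI's existing Images API; assumed to also serve gpt-image-2.
IMAGES_ENDPOINT = "https://api.openai.com/v1/images/generations"


def build_image_request(copy_text: str, language: str, quality: str = "medium") -> dict:
    """Build a JSON-serialisable request body for one localised poster."""
    if quality not in {"low", "medium", "high"}:
        raise ValueError(f"unknown quality tier: {quality}")
    prompt = (
        f"Product poster with the headline rendered in {language}, "
        f"spelled exactly as: {copy_text}"
    )
    return {
        "model": "gpt-image-2",  # API name given in the article
        "prompt": prompt,
        "size": "1024x1024",     # assumed to be accepted, as in earlier image models
        "quality": quality,      # assumed parameter name, mirroring earlier image models
        "n": 1,
    }


# Sample headlines ("New collection now available"); illustrative only.
headlines = {
    "Hindi": "नया संग्रह अब उपलब्ध",
    "Bengali": "নতুন সংগ্রহ এখন উপলব্ধ",
}
requests_to_send = [build_image_request(text, lang) for lang, text in headlines.items()]
print(json.dumps(requests_to_send[0], ensure_ascii=False, indent=2))
```

Passing the exact copy in the prompt, rather than asking the model to translate, keeps the brand's approved wording under the caller's control. Each payload would then be POSTed to `IMAGES_ENDPOINT` with an API key in the `Authorization` header.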

Thinking Mode — The Feature That Changes Professional Use Cases

The distinction between Instant and Thinking mode is the most architecturally significant change in Images 2.0. Most AI image generators are one-shot systems: you write a prompt, the model generates an image. Thinking mode changes this fundamentally:

| Capability | Instant Mode | Thinking Mode |
| --- | --- | --- |
| Access | All ChatGPT users (free + paid) | ChatGPT Plus, Pro, Business only |
| Generation approach | Fast, single-pass generation | Reasons before generating; slower but more accurate |
| Web search | No; relies on training data | Yes; can search the web for real-time accuracy |
| Multi-image output | Up to 10 images per prompt | Up to 8 images with character/style consistency across all |
| Character consistency | Limited; each image generated independently | Full consistency across frames: same character, same lighting, same style |
| Self-verification | No | Yes; double-checks its own outputs before delivering |
| Use cases | Quick mockups, social media graphics, creative exploration | Manga/comics, storyboards, educational diagrams, brand campaigns, technical documentation |
| Best for | Speed-first creative work | Accuracy-first professional production |

Research Lead Boyuan Chen described Thinking mode as moving image generation ‘from rendering to strategic design, from a tool to a visual system.’ In one demo, the model scanned social media reactions to earlier test outputs, summarised the insights visually, and produced a QR code linking back to ChatGPT, all in a single loop combining reasoning, web research, and design generation.
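The Instant versus Thinking split in the table above can be read as a simple routing rule. The sketch below encodes it as a hypothetical helper, `choose_mode`; the plan names and image limits are taken from the table, while the function itself, its parameters, and the idea that an application would pick a mode this way are assumptions for illustration only.

```python
PAID_PLANS = {"plus", "pro", "business"}  # tiers the article lists for Thinking mode


def choose_mode(plan: str, needs_web_search: bool = False,
                needs_consistency: bool = False, image_count: int = 1) -> str:
    """Return "instant" or "thinking" for a request, or raise if it cannot be served."""
    wants_thinking = needs_web_search or needs_consistency
    # Per the table: up to 10 images in Instant, up to 8 consistent images in Thinking.
    limit = 8 if wants_thinking else 10
    if not 1 <= image_count <= limit:
        raise ValueError(f"image_count must be between 1 and {limit}")
    if wants_thinking and plan.lower() not in PAID_PLANS:
        raise PermissionError("Thinking mode requires a Plus, Pro, or Business plan")
    return "thinking" if wants_thinking else "instant"


print(choose_mode("free", image_count=10))
print(choose_mode("Plus", needs_consistency=True, image_count=8))
```

The rule defaults to Instant because it is faster; only a need for web search or cross-image consistency justifies the slower mode.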

The AI Image Generation War — Where Images 2.0 Fits

| Model / Company | Launch | Key Strength | Compared with Images 2.0 |
| --- | --- | --- | --- |
| ChatGPT Images 2.0 (OpenAI) | April 21, 2026 | Text rendering, Thinking mode, conversational editing, multilingual, 2K resolution | The benchmark being set: state-of-the-art for professional production use |
| Nano Banana 2 / Gemini 3 Pro Image (Google) | February 2026 | Dense text ‘baked in’ (the only other model with comparable text capabilities); strong on maps and complex diagrams | Comparable on text and educational diagrams; Google has stronger search integration; OpenAI wins on conversational editing and Thinking mode |
| Midjourney v7 | 2025 | Exceptional artistic quality and aesthetic control; preferred by artists | Weaker on text rendering; no native web search; less useful for professional production workflows |
| Stable Diffusion 4 (Stability AI) | 2025 | Open-source; local deployment; highest customisability | Much weaker on text; no reasoning; strongest when fine-tuned for specific styles |
| DALL-E 3 (Previous OpenAI) | 2023 | Creative flexibility; ChatGPT integration | Directly replaced by Images 2.0; significantly worse on text, resolution, and instruction following |
| Adobe Firefly 4 | 2025 | Enterprise-grade copyright safety; Adobe Creative Cloud integration | More conservative output quality; strongest for enterprise brand safety compliance |

StartupFeed Insight

Text rendering is the unlock that changes everything: Every previous AI image model failure for professional use — menus with wrong spellings, infographics with garbled text, posters with invented words — traced back to the text rendering problem. Diffusion models inherently struggled with text because they didn’t ‘understand’ language as structure. gpt-image-2’s rebuilt architecture solves this. The consequence is not incremental — it shifts the entire category from ‘creative exploration’ to ‘production pipeline replacement.’

The Indian language support is underappreciated: Of all the Images 2.0 capabilities, the Hindi and Bengali rendering may have the largest practical impact in India. India’s ₹750+ Bn digital advertising market creates enormous demand for localised creative assets. Agencies currently charging for localisation of AI-generated English assets into Devanagari or Bengali will face pricing pressure immediately. Indian startups building multilingual content tools have 6-12 months before this becomes the default expectation.

Thinking mode vs Instant mode is really professional vs consumer: OpenAI’s access structure (Thinking mode for paid tiers only) is a deliberate monetisation strategy. The free tier gets a powerful tool; the paid tier gets the tool that can replace a mid-range design contractor. At Plus pricing (~$20/month), Thinking mode’s multi-image consistency and web search make it ROI-positive for any business producing regular creative content.

The ‘images are a language’ frame is strategic: OpenAI’s philosophical positioning — ‘images are a language, not decoration’ — signals that they see image generation as a modality for knowledge communication, not just aesthetics. The educational diagrams, technical documentation, and infographic capabilities of Images 2.0 are being positioned as productivity tools, not art tools. This is the framing that justifies enterprise pricing and moves the conversation from ‘AI art’ to ‘AI communication infrastructure.’

The viral moment prediction: OpenAI product manager Adele Li said during the launch briefing: ‘We believe that we are going to have another moment here.’ The reference was to the Studio Ghibli-style viral moment from earlier model releases. Images 2.0’s text rendering ability, specifically the ability to generate convincing fake screenshots, menus, documents, and UI mockups, is both its most impressive demonstration capability and its most concerning from a misinformation standpoint. The next viral moment is therefore likely to involve a category of content that raises editorial and regulatory flags.

Our prediction: Indian D2C brands and EdTech companies will be the fastest adopters of gpt-image-2 API for multilingual creative generation. By Q4 2026, at least 3 Indian SaaS companies will have built gpt-image-2-powered localisation products specifically targeting Devanagari and Bengali script markets. Midjourney will release a text-rendering update within 60 days in direct response to Images 2.0.

What Images 2.0 Still Cannot Do — The Honest Caveats

- Precise physical reasoning: OpenAI explicitly notes that the model still struggles with highly detailed structural accuracy — complex 3D spatial relationships, intricate mechanical diagrams, and highly technical illustrations may require additional review.

- Extremely dense textures: Very detailed patterns and highly complex textures may lose fidelity. Not a replacement for professional technical illustration.

- Selective area edits can bleed: Region-selected edits can extend beyond the highlighted area — plan for at least one revision pass on precision edits.

- Knowledge cutoff December 2025: Thinking mode’s web search compensates, but time-sensitive content (recent logos, brand-new product SKUs, current events) needs to come through the prompt explicitly.

- Not a Photoshop replacement for precision: Conversational editing is powerful for rough iterations. For pixel-level precision, traditional tools remain necessary for final production.

- Speed trade-off in Thinking mode: More capability means slower output. Thinking mode takes longer — a trade-off that is worth it for professional work, but not for rapid creative exploration.

API Pricing — What Developers Need to Know

| Quality Tier | Resolution | Price per Image (API) |
| --- | --- | --- |
| Low | 1024×1024 | $0.006 (~₹0.50) |
| Medium | 1024×1024 | $0.053 (~₹4.40) |
| High | Unspecified (up to 2K) | $0.211 (~₹17.50) |
| 4K (third-party platforms) | 4096×4096 | $0.41 (~₹34) |

API alias: chatgpt-image-latest tracks ChatGPT parity and always points to the current production model.

At $0.053 per medium image, a startup generating 10,000 marketing assets per month pays $530 (~₹44,000), significantly cheaper than a mid-level designer for equivalent output volume. The economic case for integrating gpt-image-2 into production creative pipelines remains compelling even at the high-quality tier, where the same volume costs $2,110 (~₹175,000).
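The arithmetic behind that monthly figure is easy to reproduce for other volumes and tiers. The sketch below uses the per-image prices from the table; the exchange rate of ₹83 per US dollar is an assumption, back-calculated from the rupee figures quoted alongside those prices, and `monthly_cost` is a hypothetical helper.

```python
PRICE_PER_IMAGE_USD = {"low": 0.006, "medium": 0.053, "high": 0.211}  # from the pricing table
INR_PER_USD = 83.0  # assumed rate, back-calculated from the article's rupee figures


def monthly_cost(images_per_month: int, tier: str) -> tuple[float, int]:
    """Return (cost in USD, cost in INR) for a month of generation at one tier."""
    usd = images_per_month * PRICE_PER_IMAGE_USD[tier]
    return round(usd, 2), round(usd * INR_PER_USD)


for tier in PRICE_PER_IMAGE_USD:
    usd, inr = monthly_cost(10_000, tier)
    print(f"{tier:>6}: ${usd:,.2f} (about ₹{inr:,})")
```

At 10,000 images a month the medium tier comes to $530, matching the figure in the text, and the high tier to $2,110. Actual bills will also depend on resolution and any token-based charges, which this sketch ignores.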

ChatGPT Images 2.0 is not an incremental improvement. It is the release that ends the ‘AI images are for creative exploration’ era and begins the ‘AI images are for production work’ era. The text rendering alone — demonstrated by a correctly spelled, professionally laid-out Mexican restaurant menu that would have been impossible two years ago — represents a category shift.

For Indian founders, designers, marketers, and developers: the window to build on top of this capability is open. Hindi and Bengali text rendering, at production quality, is available via API at roughly $0.05 per medium-quality image. This is not a feature update. It is a new raw material for Indian digital commerce.