Quick Take


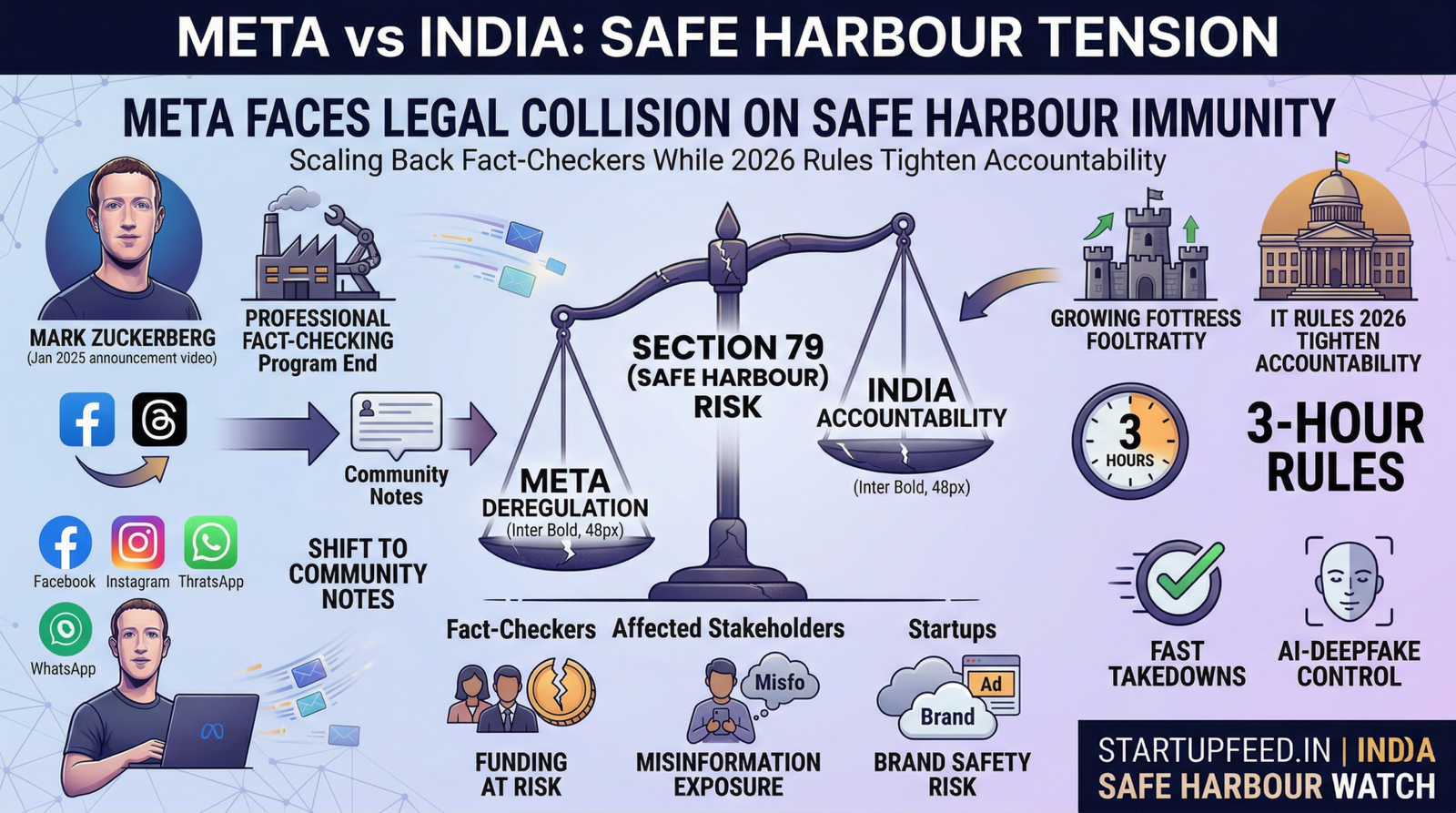

India is the largest market for Meta’s social media platforms. Facebook alone has over 3 billion users globally, and India accounts for more of them than any other country, but being the biggest does not mean being the safest. When Meta CEO Mark Zuckerberg announced in January 2025 that the company would dismantle its professional fact-checking programme, starting in the United States, and replace it with X-style Community Notes, the immediate focus was on the US. The real story, according to India’s fact-checking community, digital rights lawyers and MeitY policy watchers alike, is what the shift means for the 500 million-plus Indian users of Facebook, Instagram, Threads and WhatsApp, and for the legal framework that governs all of it.

Meta’s fact-checking retreat is not just a content policy story; it is a legal liability story. India’s Safe Harbour framework under Section 79 of the IT Act grants platforms immunity from liability for user-generated content on the condition that they observe due diligence. The 2026 IT Amendment Rules have raised that bar at the same time, mandating 3-hour takedowns and AI-deepfake controls. As Meta scales back its professional moderation infrastructure, it risks a regulatory collision: lighter internal safety spending set against heavier external compliance demands. For India’s startup ecosystem, which depends on Meta’s ad platforms and engagement algorithms for growth, the downstream consequences are both a governance risk and a business risk.

StartupFeed Insight

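What this means: India is at the centre of a global collision between platform deregulation (Meta’s direction) and platform accountability (India’s 2026 IT Rules). One side is pulling the safety net away; the other is making it legally mandatory. Platforms caught between the two face mounting Safe Harbour risk.

Winners from Meta’s moderation rollback: political content creators (short-term), Meta’s India ad revenue (short-term), and the legal and compliance sector.

Losers from Meta’s moderation rollback: Indian fact-checking organisations, D2C and consumer startups advertising on Meta, healthtech and edtech platforms, and rural, low-literacy users.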

Action required: Startups dependent on Meta’s ad ecosystem should audit brand safety controls immediately. Legal teams should monitor MeitY’s government advisory consultation (deadline April 14, 2026), since its outcome directly affects Safe Harbour compliance costs.

What Meta Changed — and What Stayed the Same

On January 7, 2025, Mark Zuckerberg announced sweeping changes to Meta’s content governance in a five-minute video titled ‘More speech and fewer mistakes’. The specific changes affecting India’s information ecosystem:

| What changed | Details | India impact |
|---|---|---|
| Professional fact-checking ended (US) | Third-party IFCN-certified fact-checkers replaced by Community Notes | India’s 11 Meta-funded fact-check organisations (covering 15 languages) now operate under uncertainty |
| Content restrictions loosened | Immigration and gender identity topics specifically cited by Zuckerberg as over-moderated | Topics with documented communal sensitivity in India now face less moderation |
| Political content limits removed | Caps on how much political content users see in their feeds removed | Engagement algorithms free to amplify political content, historically linked to unrest in India |
| Trust & Safety team moved | Teams relocating from California to Texas to ‘reduce perceived bias’ | No India-specific change, but signals deprioritisation of global moderation capacity |
| Hate Speech policy rebranded | ‘Hate Speech’ renamed ‘Hateful Conduct’; more content targeting transgender people and immigrants now allowed | Directly relevant to India’s minority communities; LGBTQ+ users face elevated risk |
| What did NOT change | Terrorism, CSAM and murder content still moderated; India fact-check contracts renewed for 2025 | Temporary reassurance; future uncertain beyond the 2025 contract period |

The Safe Harbour Question: How Section 79 Actually Works

India’s Safe Harbour framework — Section 79 of the IT Act, 2000 — is the legal architecture that allows Meta, Google, X and every other platform to operate in India without being held responsible for every piece of user-generated content. Understanding it is essential to understanding why Meta’s moderation decisions carry legal consequences in India.

| Safe Harbour element | What it means | The Meta problem |
|---|---|---|
| Section 79(1) | Intermediary not liable for third-party content by default | Baseline protection, available only as long as the conditions in sub-sections (2) and (3) are met |
| Section 79(2)(c) | Must ‘observe due diligence’ per government guidelines | Meta cutting professional moderation raises questions about due diligence adequacy |
| Section 79(3)(b): ‘actual knowledge’ | Platform loses immunity if, on receiving actual knowledge (read by the Supreme Court in Shreya Singhal v. Union of India, 2015, as a court order or government notification), it fails to expeditiously remove unlawful content | Community Notes are slower than professional fact-checkers, risking delayed action once ‘actual knowledge’ arises |
| IT Rules 2021: Grievance Officer | Requires India-resident grievance and nodal officers, plus monthly compliance reports | Met by Meta; not directly affected by fact-check removal |
| IT Amendment Rules 2026: 3-hour takedown | Court or senior government orders must be actioned within 3 hours | Meta’s reduced human moderation capacity strains its ability to meet compressed timelines |
| 2026 Amendment: SGI / deepfake rules | Platforms must detect and label synthetically generated information (SGI), i.e. AI-generated content; ‘willful blindness’ forfeits Safe Harbour | Meta’s Community Notes model is reactive, not proactive, creating potential Safe Harbour exposure |
| Proposed: Govt advisories legally binding | MeitY consultation open until April 14, 2026; would make advisories enforceable | Non-compliance could trigger Safe Harbour loss, an existential risk for Meta’s India operations |
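To make the conditional structure of Section 79 concrete, here is a deliberately simplified Python model of how its conditions stack. It is an illustration under stated assumptions, not a statement of law: the `IntermediaryConduct` fields and the `has_safe_harbour` function are hypothetical names, each boolean collapses a question a court would actually weigh, and ‘actual knowledge’ is modelled as receipt of a court order or government notification, following the Supreme Court’s reading in Shreya Singhal v. Union of India (2015).

```python
from dataclasses import dataclass


@dataclass
class IntermediaryConduct:
    """Hypothetical, simplified facts about one piece of third-party content."""
    # Section 79(2)(a)/(b), collapsed into one flag: the platform merely
    # provides access, or does not initiate, select the receiver of, or
    # modify the transmission.
    passive_conduit: bool
    # Section 79(2)(c): the platform observes due diligence under the IT Rules.
    observes_due_diligence: bool
    # Section 79(3)(a): the platform conspired in or abetted the unlawful act.
    abetted_unlawful_act: bool
    # Section 79(3)(b), simplified: a court order or government notification
    # about the content was received.
    received_order: bool
    # Whether the content was removed within the applicable deadline.
    removed_in_time: bool


def has_safe_harbour(c: IntermediaryConduct) -> bool:
    """Return True if every simplified Section 79 condition is satisfied."""
    # Sub-section (2): the access and due-diligence conditions must both hold.
    if not (c.passive_conduit and c.observes_due_diligence):
        return False
    # Sub-section (3)(a): abetment removes immunity outright.
    if c.abetted_unlawful_act:
        return False
    # Sub-section (3)(b): once notified, the platform must act in time.
    if c.received_order and not c.removed_in_time:
        return False
    return True


if __name__ == "__main__":
    # A platform that received an order but missed the removal deadline.
    late = IntermediaryConduct(
        passive_conduit=True,
        observes_due_diligence=True,
        abetted_unlawful_act=False,
        received_order=True,
        removed_in_time=False,
    )
    print(has_safe_harbour(late))  # False
```

The model makes the article’s point mechanical: a platform can satisfy every other condition and still lose immunity through the due-diligence flag alone, or through a single missed deadline after an order arrives.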

“If I, as a social media user, see an alarming post on Facebook, and there is no fact-checker to assist in identifying credible content, I am left vulnerable to being misled.”

— Senior executive, Indian fact-checking organisation (via Business Standard)

“This represents the biggest existential threat many fact checkers will have to contend with.”

— Senior executive, Indian fact-checking organisation (via The Indian Express)

India’s Specific Exposure — Why This Hits Harder Here

India is not a typical market for misinformation risk — it is one of the world’s highest-stakes environments. Several documented patterns make the scaling-back of professional moderation particularly consequential:

| Risk factor | India-specific context | Historical example |
|---|---|---|
| Scale | 500M+ Meta users across Facebook, Instagram, WhatsApp, Threads | Largest single-country user base for Meta’s platforms |
| Language diversity | Content moderation needed in 15+ Indian languages, which professional fact-checkers cover | Community Notes skew toward English and high-follower accounts; rural and regional users are underserved |
| Communal sensitivity | WhatsApp misinformation linked to mob violence incidents | Child abduction rumours spread via WhatsApp led to lynchings in multiple states |
| Election integrity | India conducts the world’s largest democratic elections | Documented coordinated inauthentic behaviour on Meta platforms ahead of multiple state elections |
| Health misinformation | Low digital literacy in Tier 2/3 markets; high WhatsApp penetration | COVID-era health misinformation spread rapidly via WhatsApp forwards |
| Political use of platforms | Ruling and opposition parties both maintain large social media operations | IT Cell activity on Facebook/WhatsApp documented by Meta’s own transparency reports |

India’s 2026 IT Rules — The Tightening on the Other Side

While Meta scales back, India’s regulatory regime in 2026 is moving in the opposite direction. The IT (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules, 2026, represent the most significant update to India’s platform governance framework since 2021:

| 2026 Rule | Old requirement | New requirement | Impact on platforms |
|---|---|---|---|
| Takedown timeline (court and government orders) | 36 hours (IT Rules 2021) | 3 hours from a court order or a notice from a senior officer (for police, DIG rank or above) | Requires 24/7 human moderation capacity |
| Emergency deepfake removal | 24 hours for intimate or artificially morphed imagery (IT Rules 2021) | 2 hours for non-consensual intimate AI imagery | Proactive detection systems mandatory |
| Grievance resolution | 15 days | 7 days | Faster response infrastructure needed |
| SGI / deepfake detection | Advisory | Mandatory; automated systems required | Failure means potential Safe Harbour loss |
| AI content labelling | Not required | Mandatory provenance metadata plus visible labels | New technical infrastructure requirement |
| Govt advisories | Advisory / voluntary | Proposed: legally binding (consultation open until April 14, 2026) | Non-compliance risks Safe Harbour loss; pending |
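The compressed windows in the 2026 Rules are easiest to appreciate as plain arithmetic. The sketch below, using Python’s standard `datetime` module, computes the latest compliant removal time for an order received at a given moment. The category keys and the `takedown_deadline` and `is_compliant` helpers are hypothetical; the 3-hour and 2-hour values are the ones listed for the 2026 Rules, and the sketch deliberately ignores questions such as when an order legally counts as ‘received’.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical category keys; window lengths follow the 2026 Rules as summarised.
TAKEDOWN_WINDOWS = {
    "court_or_govt_order": timedelta(hours=3),
    "non_consensual_intimate_ai_imagery": timedelta(hours=2),
}

# India Standard Time: a fixed UTC+05:30 offset (India observes no daylight saving).
IST = timezone(timedelta(hours=5, minutes=30), name="IST")


def takedown_deadline(category: str, received_at: datetime) -> datetime:
    """Return the latest compliant removal time for an order in `category`."""
    if received_at.tzinfo is None:
        raise ValueError("received_at must be timezone-aware")
    return received_at + TAKEDOWN_WINDOWS[category]


def is_compliant(category: str, received_at: datetime, removed_at: datetime) -> bool:
    """True if the content was removed at or before the deadline."""
    return removed_at <= takedown_deadline(category, received_at)


if __name__ == "__main__":
    # An order arriving at 11:30 pm IST must be actioned by 2:30 am the next day.
    received = datetime(2026, 3, 1, 23, 30, tzinfo=IST)
    print(takedown_deadline("court_or_govt_order", received))
    # 2026-03-02 02:30:00+05:30
```

Any order that lands late in the evening produces a deadline in the middle of the night, which is why the table ties these windows to always-on, 24/7 moderation capacity rather than business-hours review.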

Who’s Affected and How

| Stakeholder | Impact | Why |
|---|---|---|
| Indian fact-check organisations (11 Meta partners) | Negative | Funding uncertainty; business model at risk; may need to cut staff |
| D2C / consumer startups on Meta ads | Negative | Brand safety risk; ads adjacent to unmoderated content; reputational exposure |
| Healthtech startups | Negative | Health misinformation harder to counter; erodes patient trust in verified health information |
| Edtech platforms | Negative | Fake ‘shortcut’ courses harder to distinguish from verified offerings |
| Political content creators | Positive (short-term) | Less moderation friction; more reach for provocative content |
| Meta’s ad revenue (India) | Positive (short-term) | More engagement means more ad impressions and higher revenue |
| Indian AI/LLM startups | Mixed | Opportunity to build India-specific moderation tools; risk if Safe Harbour erodes for them too |
| Legal/compliance sector | Positive | Growing demand for IT Act Section 79 advisory work as rules tighten |
| Indian users (rural, low-literacy) | Highly negative | Most vulnerable to misinformation; least likely to use Community Notes effectively |

Action Checklist — For Startups, Legal Teams & Platform Users

For startups dependent on Meta’s ecosystem:

- Audit Meta ad placements now and activate brand safety controls to exclude sensitive content categories

- Monitor MeitY’s government advisory consultation (deadline April 14, 2026) and consider submitting feedback that reflects your startup’s digital safety interests

- Diversify social media marketing spend — reduce single-platform dependency on Meta given regulatory uncertainty

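- Brief your legal team on compliance obligations under the IT Amendment Rules 2026: the 3-hour takedown timeline applies to intermediaries generally, not only to Meta, and platforms with 5M+ registered Indian users also carry the additional duties of a significant social media intermediary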

For platforms and tech companies:

- Map current Safe Harbour compliance against the 2026 Amendment Rules — identify gaps in takedown response infrastructure

- Assess deepfake / synthetic content detection capability — willful blindness forfeits Section 79 immunity

- Establish 24/7 moderation escalation protocols — 3-hour and 2-hour compliance windows require always-on capacity

- Engage IFCN-affiliated Indian fact-checking organisations directly — independent of Meta funding — to protect information quality on your platform

What’s Next

- MeitY’s April 14, 2026 consultation deadline: If government advisories become legally binding, platforms that fail to comply could face direct Safe Harbour forfeiture, which would be the most consequential shift in India’s platform governance in a decade.

- Meta’s India fact-check contracts: Renewed for 2025, with no commitment beyond that. Indian organisations covering 15 languages are already planning for a future without Meta funding.

- Community Notes in India: Meta has not announced a timeline for Community Notes rollout in India. Given language diversity and digital literacy gaps, effectiveness in Indian contexts is deeply contested.

- Digital India Act: India’s proposed replacement for the IT Act 2000 has been in development since 2022. Its Safe Harbour provisions will define the next decade of platform governance, and a draft is expected before the end of 2026.

- Startup opportunity: India’s fact-check funding vacuum creates a market for AI-powered, vernacular-first misinformation detection tools — an underserved space that Indian deep-tech founders could fill.